AI Insider Threat Tops Data Security Concerns

The AI insider threat is rapidly becoming a top concern for global enterprises. According to the Thales 2026 Data Threat Report, 61% of organizations now cite AI as their biggest data security risk. The research, conducted by S&P Global 451 Research, highlights how AI is shifting from a simple tool to a trusted insider with broad system access.

Organizations across automotive, energy, finance, and retail sectors are embedding AI into analytics, customer service, development pipelines, and decision-making workflows. However, as AI systems gain automated access to enterprise data, controls often lag behind. Consequently, the AI insider threat expands faster than traditional governance models can contain it.

Expanding Access Creates Visibility Gaps

As AI adoption accelerates, data visibility remains limited. The report reveals that only 34% of organizations know where all their data resides, while just 39% fully classify their information. Moreover, 47% of sensitive cloud data remains unencrypted.

Because AI systems ingest and act on massive datasets across cloud and SaaS environments, weak oversight increases exposure. If credentials are compromised, automated systems can amplify breaches at machine speed.

Identity infrastructure is now the primary attack surface. Credential theft remains the leading cloud attack technique, cited by 67% of affected organizations. Meanwhile, 50% rank secrets management among their top application security challenges. These findings underscore how closely the AI insider threat is tied to identity governance and machine credential management.
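As a rough illustration of what machine credential management can involve, the sketch below audits an inventory of non-human credentials and flags those that are stale or over-scoped. It is not taken from the report: the `MachineCredential` type, the `flag_risky` helper, the sample inventory, and the 90-day and three-scope thresholds are all hypothetical assumptions chosen for the example.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class MachineCredential:
    """Hypothetical record for a non-human (service or AI agent) credential."""
    name: str
    last_rotated: date
    scopes: list[str]


def flag_risky(creds, today, max_age_days=90, max_scopes=3):
    """Return {credential name: [reasons]} for credentials needing review.

    The thresholds are illustrative assumptions, not figures from the report.
    """
    findings = {}
    for cred in creds:
        reasons = []
        # A credential that has not been rotated recently is treated as stale.
        if (today - cred.last_rotated).days > max_age_days:
            reasons.append("stale")
        # A wildcard scope or a long scope list suggests excessive access.
        if "*" in cred.scopes or len(cred.scopes) > max_scopes:
            reasons.append("over-scoped")
        if reasons:
            findings[cred.name] = reasons
    return findings


# Made-up inventory used only to exercise the helper.
inventory = [
    MachineCredential("analytics-agent", date(2025, 1, 10), ["read:warehouse"]),
    MachineCredential("support-bot", date(2025, 11, 1), ["*"]),
    MachineCredential("ci-deployer", date(2025, 11, 20), ["deploy:staging"]),
]

report = flag_risky(inventory, today=date(2025, 12, 1))
for name, reasons in report.items():
    print(name, reasons)
```

In practice the inventory would come from an identity provider or secrets manager rather than a hard-coded list, and the thresholds would follow the organization's own rotation and least-privilege policies.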

AI-Powered Attacks Are Growing

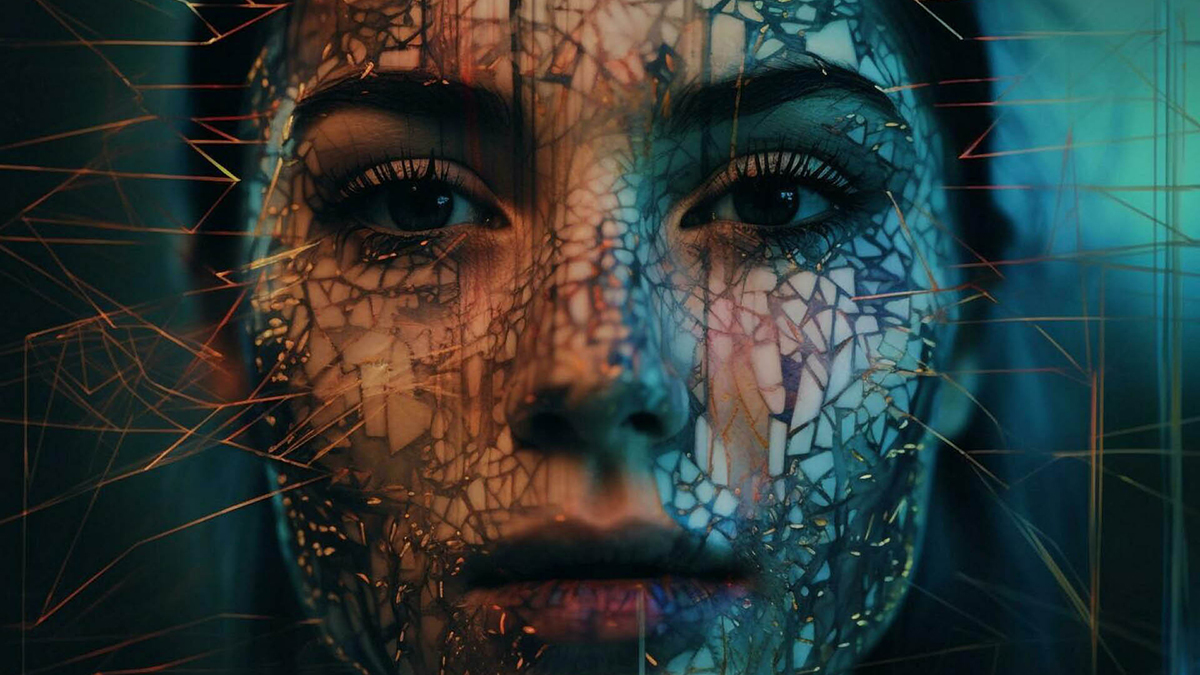

The AI insider threat does not exist in isolation. Attackers are also leveraging AI to scale deception and impersonation. Nearly 60% of companies report deepfake-driven attacks, while 48% have experienced reputational damage from AI-generated misinformation.

Furthermore, human error contributes to 28% of breaches. When automation is layered on top of existing weaknesses, small mistakes can escalate rapidly, so AI can magnify both internal misconfigurations and external threats.

Security Investment Lags Behind AI Expansion

Although awareness is growing, investment is uneven. About 30% of organizations now allocate dedicated budgets to AI security. However, 53% still rely on traditional, perimeter-based security models designed primarily for human users.

As AI systems increasingly authenticate, access, and act autonomously, legacy controls prove insufficient. Continuous data visibility, encryption, and adaptive identity governance must become foundational components of enterprise infrastructure.

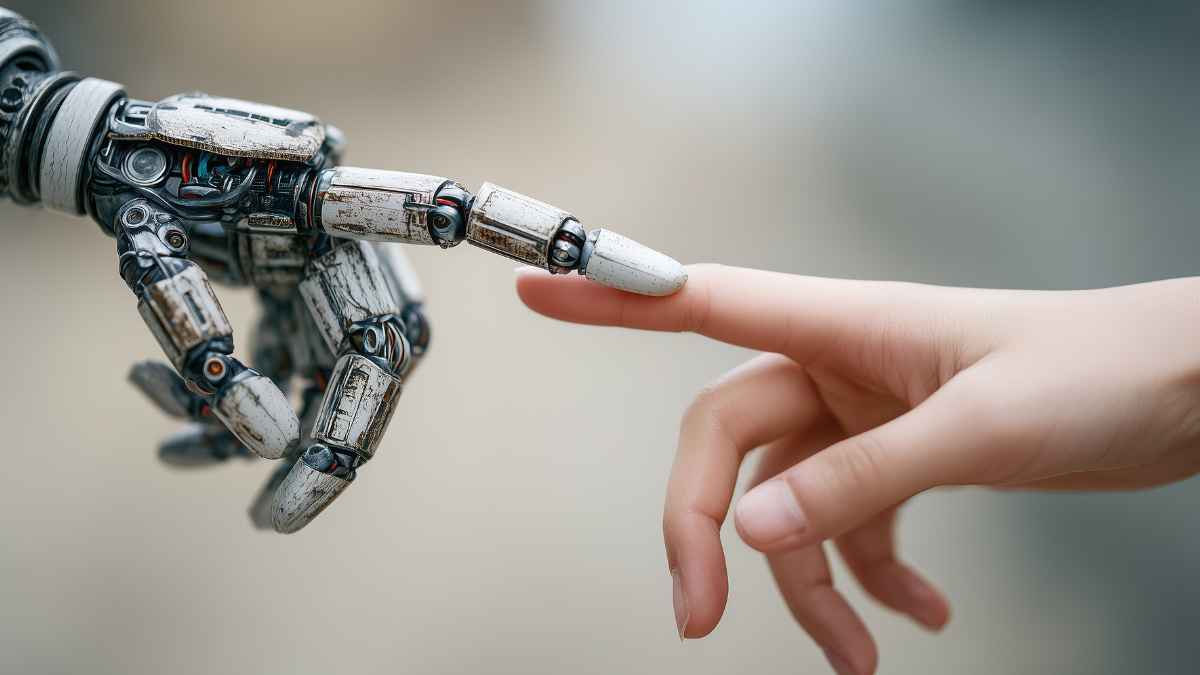

Rethinking Trust in the Age of AI

The AI insider threat highlights a broader shift in enterprise security strategy. AI is not replacing traditional risks; instead, it accelerates them. Speed, scale, and automation transform minor vulnerabilities into systemic exposure.

To innovate securely, organizations must treat data protection, encryption, and identity governance as core enablers of AI adoption. Those that embed governance directly into their AI strategies will reduce risk while maintaining agility. In contrast, enterprises that overlook these controls risk turning AI into their most powerful insider.
